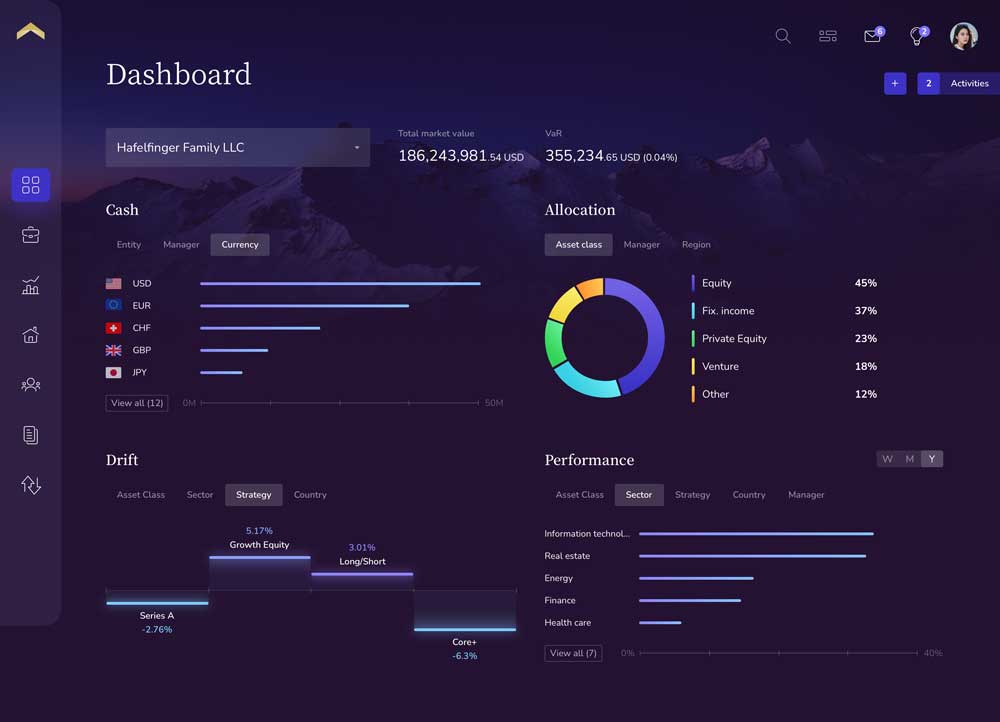

Analyzing and managing sophisticated investment portfolios for high net worth investors requires data.

One of the greatest challenges for the financial services industry is capturing, correcting, calculating and presenting complex data from multiple silo’d platforms in an automated fashion without sacrificing data accuracy, speed or operating efficiency.

During bull markets most investors and investment advisors take data for granted, opting to use charts and graphs for directional guidance as opposed to validating the data behind the visuals.

However, during market volatility data accuracy becomes the most important factor, especially in the high net worth and ultra-high net worth (UHNW) segment where basis points matter, and errors erode trust. During the 2008 financial crisis I watched many family offices and UHNW investors, who before the financial collapse were very vocal that data accuracy and consolidated analysis was ‘nice but not necessary’, literally take the time to tick-and-tie every line item on reports that were sometimes over 100 pages long.

When markets fall up to 10% in a given day sophisticated investors demand instant oversight and understanding as to what investments, which managers, and what themes or strategies drove the losses or generated gains…..and they don’t tolerate errors.

Unfortunately, I have seen many situations where some of the largest and best-known financial software systems produce errors that have ranged from over $22.6 million to over 30 percentage points – not basis points but percentage points.

Errors of this magnitude are amplified in the family office segment, especially when those errors overstate liquidity forcing principals to liquidate investments when the market is already down 10% or more.

The data problem

The first part of the data problem starts with knowing what information to capture and how to capture it. Historically firms relied on account aggregation, either manual or aggregation software platforms.

If you rely on manual aggregation you will require an increasing number of people to enter the information which drives up costs, increases delays in reporting, is often not compliant, and usually produces errors.

I recently worked with an endowment that was aggregating manually with their internal accounting team and in a 45 day period the internal team’s total errors were over 13% of the total market value of the fund.

The second option is relying on third party account aggregation software platforms. Many of those aggregators rely on screen-scraping technology that requires individuals to provide personal login credentials to each of their banks. The challenge with screen scraping is it consistently produces data errors, remains a serious security and privacy threat, and can’t support complex investments. Several CEOs at the top private banks have publicly stated their ‘war’ on screen scraping technologies. There are a few aggregation platforms that have taken the time and capital investment to build and maintain direct data feeds to major custodial platforms. However, although many people view direct feeds as the answer the fact is data feeds are simply a delivery mechanism and is largely irrelevant because it’s the data in the feed that matters most, not the feed itself.

For example, one of the largest data aggregation platforms in the private wealth industry only supports an average of (20) twenty transaction codes per data feed. When a single asset class can have over (30) thirty transaction codes only supporting 20 codes in total means a lot of data will be omitted from the feed which allows for material data errors.

Another key factor is whether or not the feed supports trade date information for all banks or only settlement date data which will lead to errors in liquidity, exposure, and performance. How a system supports various cash flows for private investments is also important. One example is the majority of systems can’t support a return of capital correctly and treats this as a dividend, which is income, as opposed to correctly adjusting the cost per lot or contribution on a pro-rata basis.

Also knowing if your system supports non-unitized securities is important, especially for people who invest in private equity, venture, and direct private investments.

If your system doesn’t support non-unitized securities it will have to create, what the industry calls, phantom price and phantom shares. If you speak to any operations person at a large private bank they’ll tell you creating phantom price/shares results in serious data errors.

Understanding the importance of data is critical in managing sophisticated wealth. Of equal importance is understanding how your current or potential software solution will fix or exacerbate the problem leading to investment decisions that could very well materially effect the preservation of multi-generational wealth.